Build and train your neural network

Contents

Build and train your neural network¶

Aim(s) for this section 🎯¶

get practical experience with

ANNs, specificallyCNNsbuilt,trainandevalauteaCNNdiscuss important building blocks and learn how to interpret outcomes

Outline for this section 📝¶

The tutorial dataset

preparing the data

BuildingandtraininganANN- a2D CNNexamplepythonanddeep learningdefining the basics

building an

ANNhow to train your network

EvaluatinganANNThe

test setConfusion matrixGeneralizationTransfer learning

import random

random.seed(0)

The tutorial dataset¶

In order to demonstrate how you can build and train an ANN we need a dataset that fits several requirements:

we are all here with

laptopsthat most likely don’t have the computational power ofHPCs and graphic cards (if you do, good for you!), thus the dataset needs to be small enough so that we can actually train ourANNwithin a short amount of time and withoutGPUsthinking this further: we also might not want to test the most simplest

ANN, but one with a fewhidden layersit would be cool to use a

datasetwith at least some real world feeling to demonstrate a somewhat typical workflow

We thus decided on a small fMRI dataset from Zhang et al. with the following specs:

two resting-state sessions from

48participantsone with

eyes-closedand one witheyes-openwe will use a subset of

volumesof each session

This will allow us to:

address a (somewhat) realistic

image processingtask viasupervised learningfor which we can employ aCNNshowcase how parameters might change the

ANNevaluate

representationsacrosslayers

A note on the datasets utilized here:

we’re very sorry that it’s so

(f)MRIfocusedwe tried to include other modalities, specifically microscopy, but:

couldn’t find datasets that fit the setup and framework of the workshop

don’t have enough experience with this modality to adapt existing ones

however, we collected a few resources on

machineanddeep learningfor microscopy herecontain a variety of pre-trained models

info on how to prepare data

we also tested and checked a few things in advance so that we can help you during the hands-on in the best way possible

https://media4.giphy.com/media/rvDtLCABDMaqY/giphy.gif?cid=ecf05e47c5qyer72l87resjeadw2zu6kdoqq1b6guo8gqr9d&rid=giphy.gif&ct=g

https://media4.giphy.com/media/rvDtLCABDMaqY/giphy.gif?cid=ecf05e47c5qyer72l87resjeadw2zu6kdoqq1b6guo8gqr9d&rid=giphy.gif&ct=g

that being said, let’s gather our

dataset

import urllib.request

url = 'https://github.com/miykael/workshop_pybrain/raw/master/workshop/notebooks/data/dataset_ML.nii.gz'

urllib.request.urlretrieve(url, 'dataest_ML.nii.gz')

('dataest_ML.nii.gz', <http.client.HTTPMessage at 0x7fe3a7f6f370>)

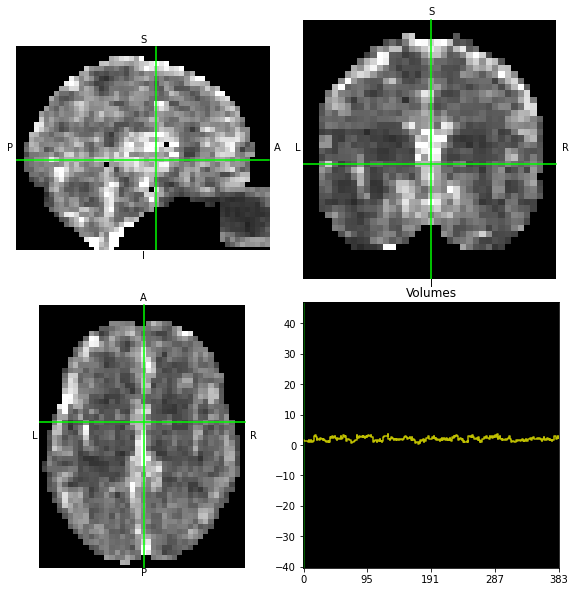

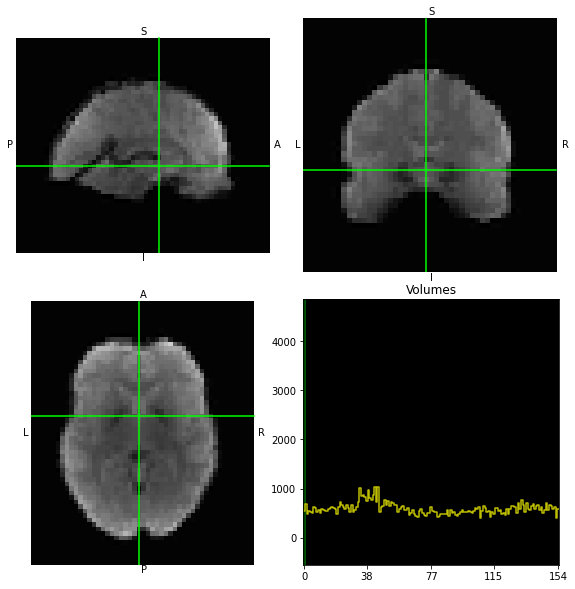

and check its dimensions as well as visually inspect it:

import nibabel as nb

data = nb.load('dataest_ML.nii.gz')

data.shape

(40, 51, 41, 384)

data.orthoview()

<OrthoSlicer3D: dataest_ML.nii.gz (40, 51, 41, 384)>

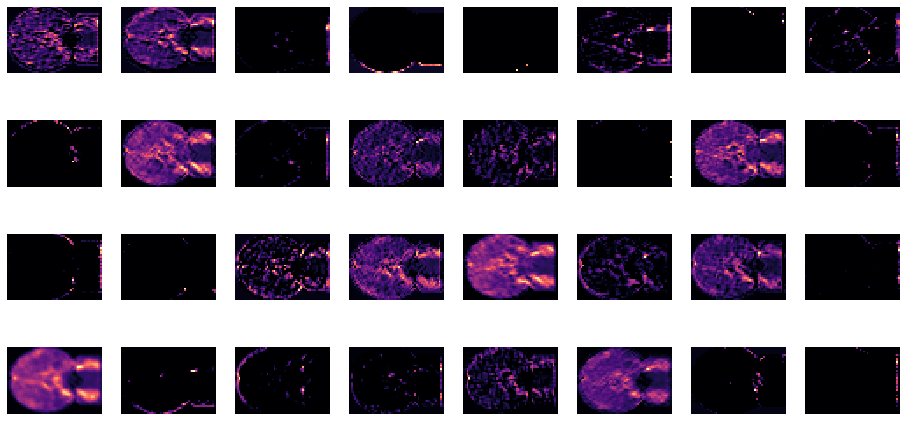

We can also plot the mean image across time to get an idea about signal variation:

from nilearn.image import mean_img

from nilearn.plotting import view_img

/Users/peerherholz/anaconda3/envs/ml-dl-synage/lib/python3.8/site-packages/nilearn/datasets/__init__.py:86: FutureWarning: Fetchers from the nilearn.datasets module will be updated in version 0.9 to return python strings instead of bytes and Pandas dataframes instead of Numpy arrays.

warn("Fetchers from the nilearn.datasets module will be "

view_img(mean_img(data))

Well well well, there should be something in there that an ANN can learn…

the task:

we know that there are

imageswhere participants had theireyes openorclosedwe now want to

buildanANNtotrainit to recognize and distinguish the respectiveimageswe also want to know what representations our

ANNlearnsthus, we have a

supervised learning problemwhich we want to solve viaimage processing

what we need to do:

prepare the data

decide on a model, build and train it

Preparing the data

From our adventures in "classic" machine learning we know, that we need labels to address a supervised learning problem. Checking the dimensions of our dataset again:

data.shape

(40, 51, 41, 384)

We see that we have a 4 dimensional dataset, with the first three dimensions being spatial, i.e. x, y and z, and the fourth being time. So we need to specify during which of the images participants had their eyes closed and during which they had their eyes open. Without going into further detail, we know that it’s always 4 volumes of eyes closed, followed by 4 volumes of eyes open, etc. and given that we have 48 participants, we can define our labels as follows:

import numpy as np

labels = np.ravel([[['closed'] * 4, ['open'] * 4] for i in range(48)])

labels[:20]

array(['closed', 'closed', 'closed', 'closed', 'open', 'open', 'open',

'open', 'closed', 'closed', 'closed', 'closed', 'open', 'open',

'open', 'open', 'closed', 'closed', 'closed', 'closed'],

dtype='<U6')

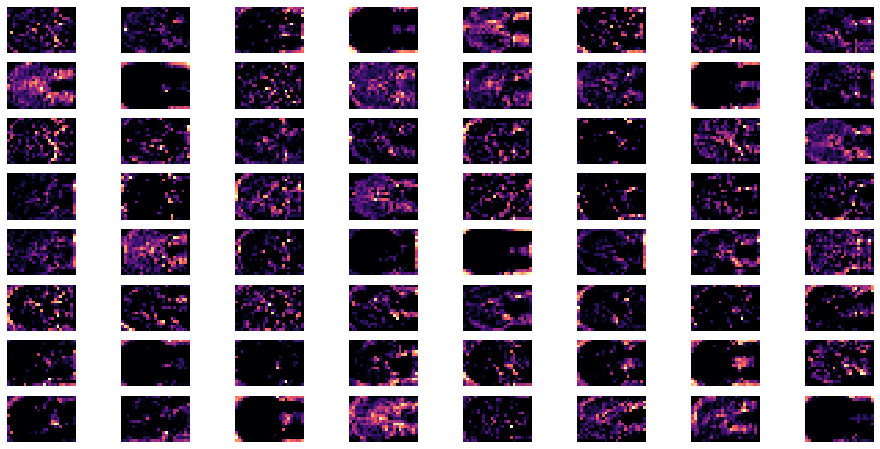

Going back to the aspect of computation time and resources, as well as given that this is a showcase, it might be a good idea to not utilize the entire fMRI volume, but only certain parts where we expect some things to happen. (Please note: this is of course a form of inductive bias comparable to feature engineering in "classic" machine learning and something you won’t do in a “real-world situation” (depending on the data and goal of course)).

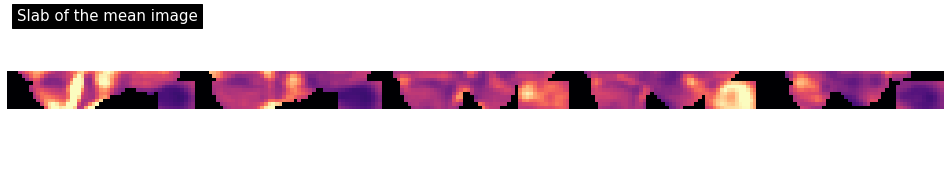

In our case, we could try to not train the neural network only on one very thin slab (a few slices) of the brain. So, instead of taking the data matrix of the whole brain, we just take 2 slices in the region that we think is most likely to be predictive for the question at hand.

We know (or suspect) that the regions with the most predictive power are probably somewhere around the eyes and in the visual cortex. So let’s try to specify a few slices that cover those regions.

So, let’s try to just take a few slices around the eyes:

from nilearn.plotting import plot_img

plot_img(mean_img(data).slicer[...,5:-25], cmap='magma', colorbar=False,

display_mode='x', vmax=2, annotate=False, cut_coords=range(0, 49, 12),

title='Slab of the mean image');

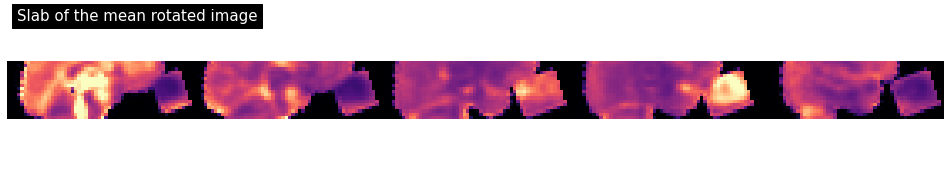

This worked only so and so, but with a few lines of code the mighty power of python and its packages can help us achieve a better training dataset. For example, we could rotate the volume (depending on the data and goal, this sort of image processing is actually sometimes done in “real-world situations”):

# Rotation parameters

phi = 0.35

cos = np.cos(phi)

sin = np.sin(phi)

# Compute rotation matrix around x-axis

rotation_affine = np.array([[1, 0, 0, 0],

[0, cos, -sin, 0],

[0, sin, cos, 0],

[0, 0, 0, 1]])

new_affine = rotation_affine.dot(data.affine)

Now we can use this new affine to resample our volumes:

from nilearn.image import resample_img

new_img = nb.Nifti1Image(data.get_fdata(), new_affine)

img_rot = resample_img(new_img, data.affine, interpolation='continuous')

How do our volumes look now?

plot_img(mean_img(img_rot).slicer[...,5:-25], cmap='magma', colorbar=False,

display_mode='x', vmax=2, annotate=False, cut_coords=range(0, 49, 12),

title='Slab of the mean rotated image');

Coolio! Now we can check what set of slices of our volumes might constitute feasible inputs to our ANN:

from nilearn.plotting import plot_stat_map

img_slab = img_rot.slicer[..., 12:15, :]

plot_stat_map(mean_img(img_slab), cmap='magma', bg_img=mean_img(img_slab), colorbar=False,

display_mode='x', vmax=2, annotate=False, cut_coords=range(-20, 30, 12),

title='Slices of the rotated image');

Now this is something we can definitely work with, even if we have only limited time and resources.

Building and training an ANN - a 2D CNN example¶

Not that we have checked and further prepared our dataset, it’s finally time to get to work. Given that we’re working with fMRI volumes, i.e. images and what we’ve heard about the different ANN architectures, using a CNN might be a good idea.

But where to start? Is there any software I can use that makes the building, training and evaluating of ANNs “comparably easy”?

Well, say no more…Python obviously also has your back when it’s about deep learning (gotta love python, eh?)! It actually has not only but a bunch of different packages that focus on deep learning. Let’s have a brief look on the things that are out there.

Python and deep learning¶

As outlined before python is a very powerful all purpose language, including a broad user base and support for machine learning, both “classic” and deep learning.

https://miro.medium.com/max/1400/1*RIrPOCyMFwFC-XULbja3rw.png

https://miro.medium.com/max/1400/1*RIrPOCyMFwFC-XULbja3rw.png

lots of well

documentedandtestedlibrarieslots of

tutorialsto learn things (you + theANN):youtube videos

blog posts

other open workshops

jupyter notebooks

lots of

pre-trained modelsto use for your researchlots of support in forums

completely free and open source!

https://miro.medium.com/max/700/1*s_BwkYxpGv34vjOHi8tDzg.png

https://miro.medium.com/max/700/1*s_BwkYxpGv34vjOHi8tDzg.png

all work a bit different, but the basic concepts and steps are comparable

nevertheless: always check the documentation as e.g.

default valuesmight vary

crucial in all: tensors

have a look at this great introduction to tensors from tensorflow

the question which one to choose is of course not an easy one and might also depend on external factors:

the type and amount of data you have

the time and computational resources available to you

specific functionality that only exists in a certain package

utilization of pre-trained

ANNswhat you’ve heard about and others show you (that’s obviously on us…)

here we will use keras which is build on top of tensorflow because:

high-level

APIeasy to grasp implementation of

ANNbuilding blocksfast experimentation

for a fantastic resource that includes all things we talked about/will talk and way more in much greater detail, please check the deep learning part of Neuromatch Academy

important: we’re not saying that

keras/tensorflowis better than the otherpython deep learning libraries, it just works very well for tutorials/workshops like the one you’re currently at given the very limited time we have

Now it’s finally go time, get your machines ready!

https://c.tenor.com/1cbzhT0TKTMAAAAd/cat-asleep.gif

https://c.tenor.com/1cbzhT0TKTMAAAAd/cat-asleep.gif

Defining the basics¶

Before we can actually assemble our ANN, we need to set a few things. However, first things first: importing modules and classes:

from tensorflow.python.keras.models import Sequential

from tensorflow.python.keras.layers import Conv2D, MaxPooling2D, AvgPool2D, BatchNormalization

from tensorflow.python.keras.layers import Activation, Dropout, Flatten, Dense

from tensorflow.keras.optimizers import Adam

Next, we need to take a look at our dataset again, specifically its dimensions:

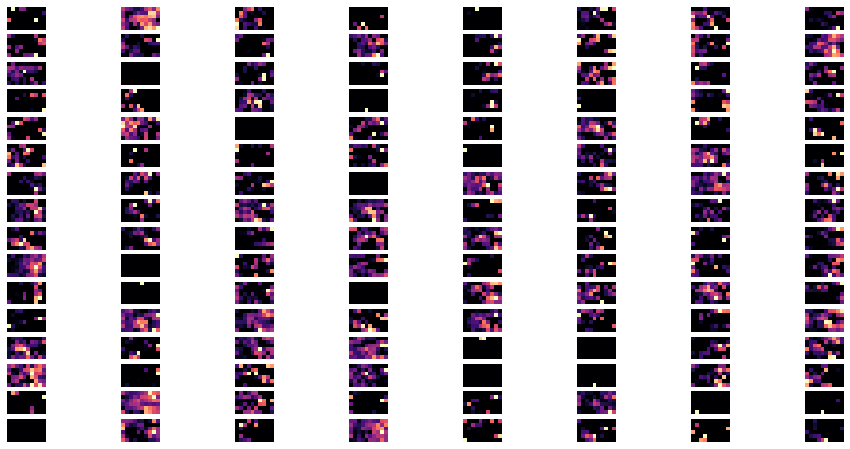

img_slab.shape

(40, 56, 3, 384)

Again, we have the x, y and z of our images, i.e. the images themselves, in the first three dimensions which are stacked in the fourth dimension. For this type of data to work with keras/tensorflow we actually need to adapt, that is swap, some of the dimensions, as these modules/functions expect them in a different way. This part of getting your data ready as input into a given ANN is crucial and can cause one or the other problem. Therefore, always make sure to carefully read the documentation of a class, module or pre-trained model you want to use. They are usually very good and show entail examples of how to get data ready for the ANN.

That being said, here we need basically only need to make the last dimension the first, so that we have the volumes/images stacked in the first dimension and the images themselves within the subsequent three:

data = np.rollaxis(img_slab.get_fdata(), 3, 0)

data.shape

(384, 40, 56, 3)

Specifically, the last dimension, here 3, are considered as channels.

There are some central parameters we can set before building the ANN itself. For example, we know the shape of the input. That is, the dimensions our input layer will receive:

data_shape = tuple(data.shape[1:])

data_shape

(40, 56, 3)

We also want to set the kernel size of our convolutional kernel. As heard before, this can be a tremendously important hyperparamter that can drastically affect the behavior of your ANN. It is thus something you have to carefully think about and even might want to evaluate via cross-validation. Here, we will use a kernel size of (3,3).

kernel_size = (3, 3)

The same holds true for the filters we want our convolutional layers to use:

filters = 32

Given that we want to work with a supervised learning problem and know that there are 2 classes we want our ANN to learn to learn distinguish, we can set the number of classes accordingly:

n_classes = 2

With that, we ready to start building our ANN!

Building an ANN¶

You heard right, it’s finally ANN time! Initially, we have to decide on an architecture, that is the type of ANN we want to build. As we want to test a simple CNN, a feedforward ANN without multiple inputs and/or outputs, we will employ what is called a sequential model in keras/tensorflows within which we define layer by layer. Note: It’s the easiest but also the most restrictive one.

model = Sequential()

2021-09-21 15:41:37.064283: I tensorflow/core/platform/cpu_feature_guard.cc:142] This TensorFlow binary is optimized with oneAPI Deep Neural Network Library (oneDNN) to use the following CPU instructions in performance-critical operations: AVX2 FMA

To enable them in other operations, rebuild TensorFlow with the appropriate compiler flags.

Now that the basic structure is defined, we can start adding layers to our ANN. This is achieved by the following syntax (pseudocode):

model.add(layer_typ(layer_settings, layer_parameters))

Defining the input layer¶

The first step? Obviously defining an input layer, i.e. the layer that receives the external input. We want to build a CNN, so let’s make it a convolutional layer. What do we need for that?

help(Conv2D)

Help on class Conv2D in module tensorflow.python.keras.layers.convolutional:

class Conv2D(Conv)

| Conv2D(*args, **kwargs)

|

| 2D convolution layer (e.g. spatial convolution over images).

|

| This layer creates a convolution kernel that is convolved

| with the layer input to produce a tensor of

| outputs. If `use_bias` is True,

| a bias vector is created and added to the outputs. Finally, if

| `activation` is not `None`, it is applied to the outputs as well.

|

| When using this layer as the first layer in a model,

| provide the keyword argument `input_shape`

| (tuple of integers or `None`, does not include the sample axis),

| e.g. `input_shape=(128, 128, 3)` for 128x128 RGB pictures

| in `data_format="channels_last"`. You can use `None` when

| a dimension has variable size.

|

| Examples:

|

| >>> # The inputs are 28x28 RGB images with `channels_last` and the batch

| >>> # size is 4.

| >>> input_shape = (4, 28, 28, 3)

| >>> x = tf.random.normal(input_shape)

| >>> y = tf.keras.layers.Conv2D(

| ... 2, 3, activation='relu', input_shape=input_shape[1:])(x)

| >>> print(y.shape)

| (4, 26, 26, 2)

|

| >>> # With `dilation_rate` as 2.

| >>> input_shape = (4, 28, 28, 3)

| >>> x = tf.random.normal(input_shape)

| >>> y = tf.keras.layers.Conv2D(

| ... 2, 3, activation='relu', dilation_rate=2, input_shape=input_shape[1:])(x)

| >>> print(y.shape)

| (4, 24, 24, 2)

|

| >>> # With `padding` as "same".

| >>> input_shape = (4, 28, 28, 3)

| >>> x = tf.random.normal(input_shape)

| >>> y = tf.keras.layers.Conv2D(

| ... 2, 3, activation='relu', padding="same", input_shape=input_shape[1:])(x)

| >>> print(y.shape)

| (4, 28, 28, 2)

|

| >>> # With extended batch shape [4, 7]:

| >>> input_shape = (4, 7, 28, 28, 3)

| >>> x = tf.random.normal(input_shape)

| >>> y = tf.keras.layers.Conv2D(

| ... 2, 3, activation='relu', input_shape=input_shape[2:])(x)

| >>> print(y.shape)

| (4, 7, 26, 26, 2)

|

|

| Args:

| filters: Integer, the dimensionality of the output space (i.e. the number of

| output filters in the convolution).

| kernel_size: An integer or tuple/list of 2 integers, specifying the height

| and width of the 2D convolution window. Can be a single integer to specify

| the same value for all spatial dimensions.

| strides: An integer or tuple/list of 2 integers, specifying the strides of

| the convolution along the height and width. Can be a single integer to

| specify the same value for all spatial dimensions. Specifying any stride

| value != 1 is incompatible with specifying any `dilation_rate` value != 1.

| padding: one of `"valid"` or `"same"` (case-insensitive).

| `"valid"` means no padding. `"same"` results in padding with zeros evenly

| to the left/right or up/down of the input such that output has the same

| height/width dimension as the input.

| data_format: A string, one of `channels_last` (default) or `channels_first`.

| The ordering of the dimensions in the inputs. `channels_last` corresponds

| to inputs with shape `(batch_size, height, width, channels)` while

| `channels_first` corresponds to inputs with shape `(batch_size, channels,

| height, width)`. It defaults to the `image_data_format` value found in

| your Keras config file at `~/.keras/keras.json`. If you never set it, then

| it will be `channels_last`.

| dilation_rate: an integer or tuple/list of 2 integers, specifying the

| dilation rate to use for dilated convolution. Can be a single integer to

| specify the same value for all spatial dimensions. Currently, specifying

| any `dilation_rate` value != 1 is incompatible with specifying any stride

| value != 1.

| groups: A positive integer specifying the number of groups in which the

| input is split along the channel axis. Each group is convolved separately

| with `filters / groups` filters. The output is the concatenation of all

| the `groups` results along the channel axis. Input channels and `filters`

| must both be divisible by `groups`.

| activation: Activation function to use. If you don't specify anything, no

| activation is applied (see `keras.activations`).

| use_bias: Boolean, whether the layer uses a bias vector.

| kernel_initializer: Initializer for the `kernel` weights matrix (see

| `keras.initializers`). Defaults to 'glorot_uniform'.

| bias_initializer: Initializer for the bias vector (see

| `keras.initializers`). Defaults to 'zeros'.

| kernel_regularizer: Regularizer function applied to the `kernel` weights

| matrix (see `keras.regularizers`).

| bias_regularizer: Regularizer function applied to the bias vector (see

| `keras.regularizers`).

| activity_regularizer: Regularizer function applied to the output of the

| layer (its "activation") (see `keras.regularizers`).

| kernel_constraint: Constraint function applied to the kernel matrix (see

| `keras.constraints`).

| bias_constraint: Constraint function applied to the bias vector (see

| `keras.constraints`).

| Input shape:

| 4+D tensor with shape: `batch_shape + (channels, rows, cols)` if

| `data_format='channels_first'`

| or 4+D tensor with shape: `batch_shape + (rows, cols, channels)` if

| `data_format='channels_last'`.

| Output shape:

| 4+D tensor with shape: `batch_shape + (filters, new_rows, new_cols)` if

| `data_format='channels_first'` or 4+D tensor with shape: `batch_shape +

| (new_rows, new_cols, filters)` if `data_format='channels_last'`. `rows`

| and `cols` values might have changed due to padding.

|

| Returns:

| A tensor of rank 4+ representing

| `activation(conv2d(inputs, kernel) + bias)`.

|

| Raises:

| ValueError: if `padding` is `"causal"`.

| ValueError: when both `strides > 1` and `dilation_rate > 1`.

|

| Method resolution order:

| Conv2D

| Conv

| tensorflow.python.keras.engine.base_layer.Layer

| tensorflow.python.module.module.Module

| tensorflow.python.training.tracking.tracking.AutoTrackable

| tensorflow.python.training.tracking.base.Trackable

| tensorflow.python.keras.utils.version_utils.LayerVersionSelector

| builtins.object

|

| Methods defined here:

|

| __init__(self, filters, kernel_size, strides=(1, 1), padding='valid', data_format=None, dilation_rate=(1, 1), groups=1, activation=None, use_bias=True, kernel_initializer='glorot_uniform', bias_initializer='zeros', kernel_regularizer=None, bias_regularizer=None, activity_regularizer=None, kernel_constraint=None, bias_constraint=None, **kwargs)

|

| ----------------------------------------------------------------------

| Methods inherited from Conv:

|

| build(self, input_shape)

| Creates the variables of the layer (optional, for subclass implementers).

|

| This is a method that implementers of subclasses of `Layer` or `Model`

| can override if they need a state-creation step in-between

| layer instantiation and layer call.

|

| This is typically used to create the weights of `Layer` subclasses.

|

| Args:

| input_shape: Instance of `TensorShape`, or list of instances of

| `TensorShape` if the layer expects a list of inputs

| (one instance per input).

|

| call(self, inputs)

| This is where the layer's logic lives.

|

| Note here that `call()` method in `tf.keras` is little bit different

| from `keras` API. In `keras` API, you can pass support masking for

| layers as additional arguments. Whereas `tf.keras` has `compute_mask()`

| method to support masking.

|

| Args:

| inputs: Input tensor, or dict/list/tuple of input tensors.

| The first positional `inputs` argument is subject to special rules:

| - `inputs` must be explicitly passed. A layer cannot have zero

| arguments, and `inputs` cannot be provided via the default value

| of a keyword argument.

| - NumPy array or Python scalar values in `inputs` get cast as tensors.

| - Keras mask metadata is only collected from `inputs`.

| - Layers are built (`build(input_shape)` method)

| using shape info from `inputs` only.

| - `input_spec` compatibility is only checked against `inputs`.

| - Mixed precision input casting is only applied to `inputs`.

| If a layer has tensor arguments in `*args` or `**kwargs`, their

| casting behavior in mixed precision should be handled manually.

| - The SavedModel input specification is generated using `inputs` only.

| - Integration with various ecosystem packages like TFMOT, TFLite,

| TF.js, etc is only supported for `inputs` and not for tensors in

| positional and keyword arguments.

| *args: Additional positional arguments. May contain tensors, although

| this is not recommended, for the reasons above.

| **kwargs: Additional keyword arguments. May contain tensors, although

| this is not recommended, for the reasons above.

| The following optional keyword arguments are reserved:

| - `training`: Boolean scalar tensor of Python boolean indicating

| whether the `call` is meant for training or inference.

| - `mask`: Boolean input mask. If the layer's `call()` method takes a

| `mask` argument, its default value will be set to the mask generated

| for `inputs` by the previous layer (if `input` did come from a layer

| that generated a corresponding mask, i.e. if it came from a Keras

| layer with masking support).

|

| Returns:

| A tensor or list/tuple of tensors.

|

| compute_output_shape(self, input_shape)

| Computes the output shape of the layer.

|

| If the layer has not been built, this method will call `build` on the

| layer. This assumes that the layer will later be used with inputs that

| match the input shape provided here.

|

| Args:

| input_shape: Shape tuple (tuple of integers)

| or list of shape tuples (one per output tensor of the layer).

| Shape tuples can include None for free dimensions,

| instead of an integer.

|

| Returns:

| An input shape tuple.

|

| get_config(self)

| Returns the config of the layer.

|

| A layer config is a Python dictionary (serializable)

| containing the configuration of a layer.

| The same layer can be reinstantiated later

| (without its trained weights) from this configuration.

|

| The config of a layer does not include connectivity

| information, nor the layer class name. These are handled

| by `Network` (one layer of abstraction above).

|

| Note that `get_config()` does not guarantee to return a fresh copy of dict

| every time it is called. The callers should make a copy of the returned dict

| if they want to modify it.

|

| Returns:

| Python dictionary.

|

| ----------------------------------------------------------------------

| Methods inherited from tensorflow.python.keras.engine.base_layer.Layer:

|

| __call__(self, *args, **kwargs)

| Wraps `call`, applying pre- and post-processing steps.

|

| Args:

| *args: Positional arguments to be passed to `self.call`.

| **kwargs: Keyword arguments to be passed to `self.call`.

|

| Returns:

| Output tensor(s).

|

| Note:

| - The following optional keyword arguments are reserved for specific uses:

| * `training`: Boolean scalar tensor of Python boolean indicating

| whether the `call` is meant for training or inference.

| * `mask`: Boolean input mask.

| - If the layer's `call` method takes a `mask` argument (as some Keras

| layers do), its default value will be set to the mask generated

| for `inputs` by the previous layer (if `input` did come from

| a layer that generated a corresponding mask, i.e. if it came from

| a Keras layer with masking support.

| - If the layer is not built, the method will call `build`.

|

| Raises:

| ValueError: if the layer's `call` method returns None (an invalid value).

| RuntimeError: if `super().__init__()` was not called in the constructor.

|

| __delattr__(self, name)

| Implement delattr(self, name).

|

| __getstate__(self)

|

| __setattr__(self, name, value)

| Support self.foo = trackable syntax.

|

| __setstate__(self, state)

|

| add_loss(self, losses, **kwargs)

| Add loss tensor(s), potentially dependent on layer inputs.

|

| Some losses (for instance, activity regularization losses) may be dependent

| on the inputs passed when calling a layer. Hence, when reusing the same

| layer on different inputs `a` and `b`, some entries in `layer.losses` may

| be dependent on `a` and some on `b`. This method automatically keeps track

| of dependencies.

|

| This method can be used inside a subclassed layer or model's `call`

| function, in which case `losses` should be a Tensor or list of Tensors.

|

| Example:

|

| ```python

| class MyLayer(tf.keras.layers.Layer):

| def call(self, inputs):

| self.add_loss(tf.abs(tf.reduce_mean(inputs)))

| return inputs

| ```

|

| This method can also be called directly on a Functional Model during

| construction. In this case, any loss Tensors passed to this Model must

| be symbolic and be able to be traced back to the model's `Input`s. These

| losses become part of the model's topology and are tracked in `get_config`.

|

| Example:

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| x = tf.keras.layers.Dense(10)(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| # Activity regularization.

| model.add_loss(tf.abs(tf.reduce_mean(x)))

| ```

|

| If this is not the case for your loss (if, for example, your loss references

| a `Variable` of one of the model's layers), you can wrap your loss in a

| zero-argument lambda. These losses are not tracked as part of the model's

| topology since they can't be serialized.

|

| Example:

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| d = tf.keras.layers.Dense(10)

| x = d(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| # Weight regularization.

| model.add_loss(lambda: tf.reduce_mean(d.kernel))

| ```

|

| Args:

| losses: Loss tensor, or list/tuple of tensors. Rather than tensors, losses

| may also be zero-argument callables which create a loss tensor.

| **kwargs: Additional keyword arguments for backward compatibility.

| Accepted values:

| inputs - Deprecated, will be automatically inferred.

|

| add_metric(self, value, name=None, **kwargs)

| Adds metric tensor to the layer.

|

| This method can be used inside the `call()` method of a subclassed layer

| or model.

|

| ```python

| class MyMetricLayer(tf.keras.layers.Layer):

| def __init__(self):

| super(MyMetricLayer, self).__init__(name='my_metric_layer')

| self.mean = tf.keras.metrics.Mean(name='metric_1')

|

| def call(self, inputs):

| self.add_metric(self.mean(inputs))

| self.add_metric(tf.reduce_sum(inputs), name='metric_2')

| return inputs

| ```

|

| This method can also be called directly on a Functional Model during

| construction. In this case, any tensor passed to this Model must

| be symbolic and be able to be traced back to the model's `Input`s. These

| metrics become part of the model's topology and are tracked when you

| save the model via `save()`.

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| x = tf.keras.layers.Dense(10)(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| model.add_metric(math_ops.reduce_sum(x), name='metric_1')

| ```

|

| Note: Calling `add_metric()` with the result of a metric object on a

| Functional Model, as shown in the example below, is not supported. This is

| because we cannot trace the metric result tensor back to the model's inputs.

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| x = tf.keras.layers.Dense(10)(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| model.add_metric(tf.keras.metrics.Mean()(x), name='metric_1')

| ```

|

| Args:

| value: Metric tensor.

| name: String metric name.

| **kwargs: Additional keyword arguments for backward compatibility.

| Accepted values:

| `aggregation` - When the `value` tensor provided is not the result of

| calling a `keras.Metric` instance, it will be aggregated by default

| using a `keras.Metric.Mean`.

|

| add_update(self, updates, inputs=None)

| Add update op(s), potentially dependent on layer inputs.

|

| Weight updates (for instance, the updates of the moving mean and variance

| in a BatchNormalization layer) may be dependent on the inputs passed

| when calling a layer. Hence, when reusing the same layer on

| different inputs `a` and `b`, some entries in `layer.updates` may be

| dependent on `a` and some on `b`. This method automatically keeps track

| of dependencies.

|

| This call is ignored when eager execution is enabled (in that case, variable

| updates are run on the fly and thus do not need to be tracked for later

| execution).

|

| Args:

| updates: Update op, or list/tuple of update ops, or zero-arg callable

| that returns an update op. A zero-arg callable should be passed in

| order to disable running the updates by setting `trainable=False`

| on this Layer, when executing in Eager mode.

| inputs: Deprecated, will be automatically inferred.

|

| add_variable(self, *args, **kwargs)

| Deprecated, do NOT use! Alias for `add_weight`.

|

| add_weight(self, name=None, shape=None, dtype=None, initializer=None, regularizer=None, trainable=None, constraint=None, use_resource=None, synchronization=<VariableSynchronization.AUTO: 0>, aggregation=<VariableAggregation.NONE: 0>, **kwargs)

| Adds a new variable to the layer.

|

| Args:

| name: Variable name.

| shape: Variable shape. Defaults to scalar if unspecified.

| dtype: The type of the variable. Defaults to `self.dtype`.

| initializer: Initializer instance (callable).

| regularizer: Regularizer instance (callable).

| trainable: Boolean, whether the variable should be part of the layer's

| "trainable_variables" (e.g. variables, biases)

| or "non_trainable_variables" (e.g. BatchNorm mean and variance).

| Note that `trainable` cannot be `True` if `synchronization`

| is set to `ON_READ`.

| constraint: Constraint instance (callable).

| use_resource: Whether to use `ResourceVariable`.

| synchronization: Indicates when a distributed a variable will be

| aggregated. Accepted values are constants defined in the class

| `tf.VariableSynchronization`. By default the synchronization is set to

| `AUTO` and the current `DistributionStrategy` chooses

| when to synchronize. If `synchronization` is set to `ON_READ`,

| `trainable` must not be set to `True`.

| aggregation: Indicates how a distributed variable will be aggregated.

| Accepted values are constants defined in the class

| `tf.VariableAggregation`.

| **kwargs: Additional keyword arguments. Accepted values are `getter`,

| `collections`, `experimental_autocast` and `caching_device`.

|

| Returns:

| The variable created.

|

| Raises:

| ValueError: When giving unsupported dtype and no initializer or when

| trainable has been set to True with synchronization set as `ON_READ`.

|

| apply(self, inputs, *args, **kwargs)

| Deprecated, do NOT use!

|

| This is an alias of `self.__call__`.

|

| Args:

| inputs: Input tensor(s).

| *args: additional positional arguments to be passed to `self.call`.

| **kwargs: additional keyword arguments to be passed to `self.call`.

|

| Returns:

| Output tensor(s).

|

| compute_mask(self, inputs, mask=None)

| Computes an output mask tensor.

|

| Args:

| inputs: Tensor or list of tensors.

| mask: Tensor or list of tensors.

|

| Returns:

| None or a tensor (or list of tensors,

| one per output tensor of the layer).

|

| compute_output_signature(self, input_signature)

| Compute the output tensor signature of the layer based on the inputs.

|

| Unlike a TensorShape object, a TensorSpec object contains both shape

| and dtype information for a tensor. This method allows layers to provide

| output dtype information if it is different from the input dtype.

| For any layer that doesn't implement this function,

| the framework will fall back to use `compute_output_shape`, and will

| assume that the output dtype matches the input dtype.

|

| Args:

| input_signature: Single TensorSpec or nested structure of TensorSpec

| objects, describing a candidate input for the layer.

|

| Returns:

| Single TensorSpec or nested structure of TensorSpec objects, describing

| how the layer would transform the provided input.

|

| Raises:

| TypeError: If input_signature contains a non-TensorSpec object.

|

| count_params(self)

| Count the total number of scalars composing the weights.

|

| Returns:

| An integer count.

|

| Raises:

| ValueError: if the layer isn't yet built

| (in which case its weights aren't yet defined).

|

| finalize_state(self)

| Finalizes the layers state after updating layer weights.

|

| This function can be subclassed in a layer and will be called after updating

| a layer weights. It can be overridden to finalize any additional layer state

| after a weight update.

|

| get_input_at(self, node_index)

| Retrieves the input tensor(s) of a layer at a given node.

|

| Args:

| node_index: Integer, index of the node

| from which to retrieve the attribute.

| E.g. `node_index=0` will correspond to the

| first input node of the layer.

|

| Returns:

| A tensor (or list of tensors if the layer has multiple inputs).

|

| Raises:

| RuntimeError: If called in Eager mode.

|

| get_input_mask_at(self, node_index)

| Retrieves the input mask tensor(s) of a layer at a given node.

|

| Args:

| node_index: Integer, index of the node

| from which to retrieve the attribute.

| E.g. `node_index=0` will correspond to the

| first time the layer was called.

|

| Returns:

| A mask tensor

| (or list of tensors if the layer has multiple inputs).

|

| get_input_shape_at(self, node_index)

| Retrieves the input shape(s) of a layer at a given node.

|

| Args:

| node_index: Integer, index of the node

| from which to retrieve the attribute.

| E.g. `node_index=0` will correspond to the

| first time the layer was called.

|

| Returns:

| A shape tuple

| (or list of shape tuples if the layer has multiple inputs).

|

| Raises:

| RuntimeError: If called in Eager mode.

|

| get_losses_for(self, inputs)

| Deprecated, do NOT use!

|

| Retrieves losses relevant to a specific set of inputs.

|

| Args:

| inputs: Input tensor or list/tuple of input tensors.

|

| Returns:

| List of loss tensors of the layer that depend on `inputs`.

|

| get_output_at(self, node_index)

| Retrieves the output tensor(s) of a layer at a given node.

|

| Args:

| node_index: Integer, index of the node

| from which to retrieve the attribute.

| E.g. `node_index=0` will correspond to the

| first output node of the layer.

|

| Returns:

| A tensor (or list of tensors if the layer has multiple outputs).

|

| Raises:

| RuntimeError: If called in Eager mode.

|

| get_output_mask_at(self, node_index)

| Retrieves the output mask tensor(s) of a layer at a given node.

|

| Args:

| node_index: Integer, index of the node

| from which to retrieve the attribute.

| E.g. `node_index=0` will correspond to the

| first time the layer was called.

|

| Returns:

| A mask tensor

| (or list of tensors if the layer has multiple outputs).

|

| get_output_shape_at(self, node_index)

| Retrieves the output shape(s) of a layer at a given node.

|

| Args:

| node_index: Integer, index of the node

| from which to retrieve the attribute.

| E.g. `node_index=0` will correspond to the

| first time the layer was called.

|

| Returns:

| A shape tuple

| (or list of shape tuples if the layer has multiple outputs).

|

| Raises:

| RuntimeError: If called in Eager mode.

|

| get_updates_for(self, inputs)

| Deprecated, do NOT use!

|

| Retrieves updates relevant to a specific set of inputs.

|

| Args:

| inputs: Input tensor or list/tuple of input tensors.

|

| Returns:

| List of update ops of the layer that depend on `inputs`.

|

| get_weights(self)

| Returns the current weights of the layer, as NumPy arrays.

|

| The weights of a layer represent the state of the layer. This function

| returns both trainable and non-trainable weight values associated with this

| layer as a list of NumPy arrays, which can in turn be used to load state

| into similarly parameterized layers.

|

| For example, a `Dense` layer returns a list of two values: the kernel matrix

| and the bias vector. These can be used to set the weights of another

| `Dense` layer:

|

| >>> layer_a = tf.keras.layers.Dense(1,

| ... kernel_initializer=tf.constant_initializer(1.))

| >>> a_out = layer_a(tf.convert_to_tensor([[1., 2., 3.]]))

| >>> layer_a.get_weights()

| [array([[1.],

| [1.],

| [1.]], dtype=float32), array([0.], dtype=float32)]

| >>> layer_b = tf.keras.layers.Dense(1,

| ... kernel_initializer=tf.constant_initializer(2.))

| >>> b_out = layer_b(tf.convert_to_tensor([[10., 20., 30.]]))

| >>> layer_b.get_weights()

| [array([[2.],

| [2.],

| [2.]], dtype=float32), array([0.], dtype=float32)]

| >>> layer_b.set_weights(layer_a.get_weights())

| >>> layer_b.get_weights()

| [array([[1.],

| [1.],

| [1.]], dtype=float32), array([0.], dtype=float32)]

|

| Returns:

| Weights values as a list of NumPy arrays.

|

| set_weights(self, weights)

| Sets the weights of the layer, from NumPy arrays.

|

| The weights of a layer represent the state of the layer. This function

| sets the weight values from numpy arrays. The weight values should be

| passed in the order they are created by the layer. Note that the layer's

| weights must be instantiated before calling this function, by calling

| the layer.

|

| For example, a `Dense` layer returns a list of two values: the kernel matrix

| and the bias vector. These can be used to set the weights of another

| `Dense` layer:

|

| >>> layer_a = tf.keras.layers.Dense(1,

| ... kernel_initializer=tf.constant_initializer(1.))

| >>> a_out = layer_a(tf.convert_to_tensor([[1., 2., 3.]]))

| >>> layer_a.get_weights()

| [array([[1.],

| [1.],

| [1.]], dtype=float32), array([0.], dtype=float32)]

| >>> layer_b = tf.keras.layers.Dense(1,

| ... kernel_initializer=tf.constant_initializer(2.))

| >>> b_out = layer_b(tf.convert_to_tensor([[10., 20., 30.]]))

| >>> layer_b.get_weights()

| [array([[2.],

| [2.],

| [2.]], dtype=float32), array([0.], dtype=float32)]

| >>> layer_b.set_weights(layer_a.get_weights())

| >>> layer_b.get_weights()

| [array([[1.],

| [1.],

| [1.]], dtype=float32), array([0.], dtype=float32)]

|

| Args:

| weights: a list of NumPy arrays. The number

| of arrays and their shape must match

| number of the dimensions of the weights

| of the layer (i.e. it should match the

| output of `get_weights`).

|

| Raises:

| ValueError: If the provided weights list does not match the

| layer's specifications.

|

| ----------------------------------------------------------------------

| Class methods inherited from tensorflow.python.keras.engine.base_layer.Layer:

|

| from_config(config) from builtins.type

| Creates a layer from its config.

|

| This method is the reverse of `get_config`,

| capable of instantiating the same layer from the config

| dictionary. It does not handle layer connectivity

| (handled by Network), nor weights (handled by `set_weights`).

|

| Args:

| config: A Python dictionary, typically the

| output of get_config.

|

| Returns:

| A layer instance.

|

| ----------------------------------------------------------------------

| Readonly properties inherited from tensorflow.python.keras.engine.base_layer.Layer:

|

| compute_dtype

| The dtype of the layer's computations.

|

| This is equivalent to `Layer.dtype_policy.compute_dtype`. Unless

| mixed precision is used, this is the same as `Layer.dtype`, the dtype of

| the weights.

|

| Layers automatically cast their inputs to the compute dtype, which causes

| computations and the output to be in the compute dtype as well. This is done

| by the base Layer class in `Layer.__call__`, so you do not have to insert

| these casts if implementing your own layer.

|

| Layers often perform certain internal computations in higher precision when

| `compute_dtype` is float16 or bfloat16 for numeric stability. The output

| will still typically be float16 or bfloat16 in such cases.

|

| Returns:

| The layer's compute dtype.

|

| dtype

| The dtype of the layer weights.

|

| This is equivalent to `Layer.dtype_policy.variable_dtype`. Unless

| mixed precision is used, this is the same as `Layer.compute_dtype`, the

| dtype of the layer's computations.

|

| dtype_policy

| The dtype policy associated with this layer.

|

| This is an instance of a `tf.keras.mixed_precision.Policy`.

|

| dynamic

| Whether the layer is dynamic (eager-only); set in the constructor.

|

| inbound_nodes

| Deprecated, do NOT use! Only for compatibility with external Keras.

|

| input

| Retrieves the input tensor(s) of a layer.

|

| Only applicable if the layer has exactly one input,

| i.e. if it is connected to one incoming layer.

|

| Returns:

| Input tensor or list of input tensors.

|

| Raises:

| RuntimeError: If called in Eager mode.

| AttributeError: If no inbound nodes are found.

|

| input_mask

| Retrieves the input mask tensor(s) of a layer.

|

| Only applicable if the layer has exactly one inbound node,

| i.e. if it is connected to one incoming layer.

|

| Returns:

| Input mask tensor (potentially None) or list of input

| mask tensors.

|

| Raises:

| AttributeError: if the layer is connected to

| more than one incoming layers.

|

| input_shape

| Retrieves the input shape(s) of a layer.

|

| Only applicable if the layer has exactly one input,

| i.e. if it is connected to one incoming layer, or if all inputs

| have the same shape.

|

| Returns:

| Input shape, as an integer shape tuple

| (or list of shape tuples, one tuple per input tensor).

|

| Raises:

| AttributeError: if the layer has no defined input_shape.

| RuntimeError: if called in Eager mode.

|

| losses

| List of losses added using the `add_loss()` API.

|

| Variable regularization tensors are created when this property is accessed,

| so it is eager safe: accessing `losses` under a `tf.GradientTape` will

| propagate gradients back to the corresponding variables.

|

| Examples:

|

| >>> class MyLayer(tf.keras.layers.Layer):

| ... def call(self, inputs):

| ... self.add_loss(tf.abs(tf.reduce_mean(inputs)))

| ... return inputs

| >>> l = MyLayer()

| >>> l(np.ones((10, 1)))

| >>> l.losses

| [1.0]

|

| >>> inputs = tf.keras.Input(shape=(10,))

| >>> x = tf.keras.layers.Dense(10)(inputs)

| >>> outputs = tf.keras.layers.Dense(1)(x)

| >>> model = tf.keras.Model(inputs, outputs)

| >>> # Activity regularization.

| >>> len(model.losses)

| 0

| >>> model.add_loss(tf.abs(tf.reduce_mean(x)))

| >>> len(model.losses)

| 1

|

| >>> inputs = tf.keras.Input(shape=(10,))

| >>> d = tf.keras.layers.Dense(10, kernel_initializer='ones')

| >>> x = d(inputs)

| >>> outputs = tf.keras.layers.Dense(1)(x)

| >>> model = tf.keras.Model(inputs, outputs)

| >>> # Weight regularization.

| >>> model.add_loss(lambda: tf.reduce_mean(d.kernel))

| >>> model.losses

| [<tf.Tensor: shape=(), dtype=float32, numpy=1.0>]

|

| Returns:

| A list of tensors.

|

| metrics

| List of metrics added using the `add_metric()` API.

|

| Example:

|

| >>> input = tf.keras.layers.Input(shape=(3,))

| >>> d = tf.keras.layers.Dense(2)

| >>> output = d(input)

| >>> d.add_metric(tf.reduce_max(output), name='max')

| >>> d.add_metric(tf.reduce_min(output), name='min')

| >>> [m.name for m in d.metrics]

| ['max', 'min']

|

| Returns:

| A list of `Metric` objects.

|

| name

| Name of the layer (string), set in the constructor.

|

| non_trainable_variables

| Sequence of non-trainable variables owned by this module and its submodules.

|

| Note: this method uses reflection to find variables on the current instance

| and submodules. For performance reasons you may wish to cache the result

| of calling this method if you don't expect the return value to change.

|

| Returns:

| A sequence of variables for the current module (sorted by attribute

| name) followed by variables from all submodules recursively (breadth

| first).

|

| non_trainable_weights

| List of all non-trainable weights tracked by this layer.

|

| Non-trainable weights are *not* updated during training. They are expected

| to be updated manually in `call()`.

|

| Returns:

| A list of non-trainable variables.

|

| outbound_nodes

| Deprecated, do NOT use! Only for compatibility with external Keras.

|

| output

| Retrieves the output tensor(s) of a layer.

|

| Only applicable if the layer has exactly one output,

| i.e. if it is connected to one incoming layer.

|

| Returns:

| Output tensor or list of output tensors.

|

| Raises:

| AttributeError: if the layer is connected to more than one incoming

| layers.

| RuntimeError: if called in Eager mode.

|

| output_mask

| Retrieves the output mask tensor(s) of a layer.

|

| Only applicable if the layer has exactly one inbound node,

| i.e. if it is connected to one incoming layer.

|

| Returns:

| Output mask tensor (potentially None) or list of output

| mask tensors.

|

| Raises:

| AttributeError: if the layer is connected to

| more than one incoming layers.

|

| output_shape

| Retrieves the output shape(s) of a layer.

|

| Only applicable if the layer has one output,

| or if all outputs have the same shape.

|

| Returns:

| Output shape, as an integer shape tuple

| (or list of shape tuples, one tuple per output tensor).

|

| Raises:

| AttributeError: if the layer has no defined output shape.

| RuntimeError: if called in Eager mode.

|

| trainable_variables

| Sequence of trainable variables owned by this module and its submodules.

|

| Note: this method uses reflection to find variables on the current instance

| and submodules. For performance reasons you may wish to cache the result

| of calling this method if you don't expect the return value to change.

|

| Returns:

| A sequence of variables for the current module (sorted by attribute

| name) followed by variables from all submodules recursively (breadth

| first).

|

| trainable_weights

| List of all trainable weights tracked by this layer.

|

| Trainable weights are updated via gradient descent during training.

|

| Returns:

| A list of trainable variables.

|

| updates

|

| variable_dtype

| Alias of `Layer.dtype`, the dtype of the weights.

|

| variables

| Returns the list of all layer variables/weights.

|

| Alias of `self.weights`.

|

| Note: This will not track the weights of nested `tf.Modules` that are not

| themselves Keras layers.

|

| Returns:

| A list of variables.

|

| weights

| Returns the list of all layer variables/weights.

|

| Returns:

| A list of variables.

|

| ----------------------------------------------------------------------

| Data descriptors inherited from tensorflow.python.keras.engine.base_layer.Layer:

|

| activity_regularizer

| Optional regularizer function for the output of this layer.

|

| input_spec

| `InputSpec` instance(s) describing the input format for this layer.

|

| When you create a layer subclass, you can set `self.input_spec` to enable

| the layer to run input compatibility checks when it is called.

| Consider a `Conv2D` layer: it can only be called on a single input tensor

| of rank 4. As such, you can set, in `__init__()`:

|

| ```python

| self.input_spec = tf.keras.layers.InputSpec(ndim=4)

| ```

|

| Now, if you try to call the layer on an input that isn't rank 4

| (for instance, an input of shape `(2,)`, it will raise a nicely-formatted

| error:

|

| ```

| ValueError: Input 0 of layer conv2d is incompatible with the layer:

| expected ndim=4, found ndim=1. Full shape received: [2]

| ```

|

| Input checks that can be specified via `input_spec` include:

| - Structure (e.g. a single input, a list of 2 inputs, etc)

| - Shape

| - Rank (ndim)

| - Dtype

|

| For more information, see `tf.keras.layers.InputSpec`.

|

| Returns:

| A `tf.keras.layers.InputSpec` instance, or nested structure thereof.

|

| stateful

|

| supports_masking

| Whether this layer supports computing a mask using `compute_mask`.

|

| trainable

|

| ----------------------------------------------------------------------

| Class methods inherited from tensorflow.python.module.module.Module:

|

| with_name_scope(method) from builtins.type

| Decorator to automatically enter the module name scope.

|

| >>> class MyModule(tf.Module):

| ... @tf.Module.with_name_scope

| ... def __call__(self, x):

| ... if not hasattr(self, 'w'):

| ... self.w = tf.Variable(tf.random.normal([x.shape[1], 3]))

| ... return tf.matmul(x, self.w)

|

| Using the above module would produce `tf.Variable`s and `tf.Tensor`s whose

| names included the module name:

|

| >>> mod = MyModule()

| >>> mod(tf.ones([1, 2]))

| <tf.Tensor: shape=(1, 3), dtype=float32, numpy=..., dtype=float32)>

| >>> mod.w

| <tf.Variable 'my_module/Variable:0' shape=(2, 3) dtype=float32,

| numpy=..., dtype=float32)>

|

| Args:

| method: The method to wrap.

|

| Returns:

| The original method wrapped such that it enters the module's name scope.

|

| ----------------------------------------------------------------------

| Readonly properties inherited from tensorflow.python.module.module.Module:

|

| name_scope

| Returns a `tf.name_scope` instance for this class.

|

| submodules

| Sequence of all sub-modules.

|

| Submodules are modules which are properties of this module, or found as

| properties of modules which are properties of this module (and so on).

|

| >>> a = tf.Module()

| >>> b = tf.Module()

| >>> c = tf.Module()

| >>> a.b = b

| >>> b.c = c

| >>> list(a.submodules) == [b, c]

| True

| >>> list(b.submodules) == [c]

| True

| >>> list(c.submodules) == []

| True

|

| Returns:

| A sequence of all submodules.

|

| ----------------------------------------------------------------------

| Data descriptors inherited from tensorflow.python.training.tracking.base.Trackable:

|

| __dict__

| dictionary for instance variables (if defined)

|

| __weakref__

| list of weak references to the object (if defined)

|

| ----------------------------------------------------------------------

| Static methods inherited from tensorflow.python.keras.utils.version_utils.LayerVersionSelector:

|

| __new__(cls, *args, **kwargs)

| Create and return a new object. See help(type) for accurate signature.

Ok, there are quite a few parameters to set. However, we are going to keep it light and breezy, setting a few of the things we’ve talked about: the number of filter, the kernel size, the activation function and the shape of the input which in our case is the shape of our images.

model.add(Conv2D(filters, kernel_size, activation='relu', input_shape=data_shape))

Batch normalization layer¶

As briefly addressed before, batch normalization can be very helpful: speed up the training, addresses internal covariate shift (highly debated), smoothes the loss function, etc. . It does so via re-centering and re-scaling the inputs of a given layer. Thus, we are going to include batch normalization layers also in our ANN:

model.add(BatchNormalization())

As you can see, we added the batch normalization layer right after the convolutional layer so that the latter’s output will be re-centered and re-scaled.

Pooling layer¶

Another important part of CNN architectures is the pooling layer, i.e. the layer that reduces the spatial size of the representation computed in the previous layer, i.e. convolutional layer. In turn, we can reduce the amount of parameters and thus computation our ANN needs to perform. Out of the two pooling options, max pooling and average pooling, CNNs typically utilize max pooling because it helps to detect certain features more easily and as the representation becomes more abstract also helps to reduce overfitting. Sounds like a good idea, eh?

model.add(MaxPooling2D())

Getting more fine-grained¶

In order to get our ANN and the features it works on more fine-grained, we will double the filter size for the next step, i.e. layer(s).

filters *= 2

Along this line of thought, we will repeat the succession of convolutional, batch normalization, pooling and filter size increase two more times:

model.add(Conv2D(filters, kernel_size, activation='relu'))

model.add(BatchNormalization())

model.add(MaxPooling2D())

filters *= 2

model.add(Conv2D(filters, kernel_size, activation='relu'))

model.add(BatchNormalization())

model.add(MaxPooling2D())

filters *= 2

Please note: we removed the input_shape parameter from the Conv2D layers as they are not input layers.

It’s getting dense¶

Now that we’ve sent our input through several layers aimed at obtaining representations, it might be worth a try to think about we can achieve our supervised learning goal. Given that we want to have a binary outcome, i.e. eyes open or eyes closed, we want to classify. We can achieve this via dense or fully connected layers (think about MLPs again). However, for this to work, we need to add a flatten layer before that. The reason: even though we convoluted and pooled our input quite a bit, it’s still multidimensional and we need it linear to pass it through a dense/fully connected layer.

model.add(Flatten())

Another thing we need to remember is regularization, that is we need to address overfitting. A brief recap: given that our ANN will have a large number of parameters together with the universal function approximation theorem, there’s for example the possibility that our ANN will just “memorize” the dataset without capturing the information we want to obtain, thus failing to generalize to new data. And why that’s cool in theory (the memorizing part, not the failed generalization part), we obviously want to avoid that. Therefore, we need to apply regularization via imposing constraints on the ANN’s parameters or adapting the cost function. One way to go would be the application of dropout layers that randomly and temporally set nodes in our layers to 0, i.e. deleting them during the training.

model.add(Dropout(0.5))

The parameter we added here, 0.5, specifies the dropout rate or in other words the fraction of the input units, i.e. nodes, to drop. This is a commonly applied value, but does not mean it should also be the default!

Time to go dense and start with our first respective layer. As with the other layer types, there a bunch of parameters we can define:

help(Dense)

Help on class Dense in module tensorflow.python.keras.layers.core:

class Dense(tensorflow.python.keras.engine.base_layer.Layer)

| Dense(*args, **kwargs)

|

| Just your regular densely-connected NN layer.

|

| `Dense` implements the operation:

| `output = activation(dot(input, kernel) + bias)`

| where `activation` is the element-wise activation function

| passed as the `activation` argument, `kernel` is a weights matrix

| created by the layer, and `bias` is a bias vector created by the layer

| (only applicable if `use_bias` is `True`). These are all attributes of

| `Dense`.

|

| Note: If the input to the layer has a rank greater than 2, then `Dense`

| computes the dot product between the `inputs` and the `kernel` along the

| last axis of the `inputs` and axis 0 of the `kernel` (using `tf.tensordot`).

| For example, if input has dimensions `(batch_size, d0, d1)`,

| then we create a `kernel` with shape `(d1, units)`, and the `kernel` operates

| along axis 2 of the `input`, on every sub-tensor of shape `(1, 1, d1)`

| (there are `batch_size * d0` such sub-tensors).

| The output in this case will have shape `(batch_size, d0, units)`.

|

| Besides, layer attributes cannot be modified after the layer has been called

| once (except the `trainable` attribute).

| When a popular kwarg `input_shape` is passed, then keras will create

| an input layer to insert before the current layer. This can be treated

| equivalent to explicitly defining an `InputLayer`.

|

| Example:

|

| >>> # Create a `Sequential` model and add a Dense layer as the first layer.

| >>> model = tf.keras.models.Sequential()

| >>> model.add(tf.keras.Input(shape=(16,)))

| >>> model.add(tf.keras.layers.Dense(32, activation='relu'))

| >>> # Now the model will take as input arrays of shape (None, 16)

| >>> # and output arrays of shape (None, 32).

| >>> # Note that after the first layer, you don't need to specify

| >>> # the size of the input anymore:

| >>> model.add(tf.keras.layers.Dense(32))

| >>> model.output_shape

| (None, 32)

|

| Args:

| units: Positive integer, dimensionality of the output space.

| activation: Activation function to use.

| If you don't specify anything, no activation is applied

| (ie. "linear" activation: `a(x) = x`).

| use_bias: Boolean, whether the layer uses a bias vector.

| kernel_initializer: Initializer for the `kernel` weights matrix.

| bias_initializer: Initializer for the bias vector.

| kernel_regularizer: Regularizer function applied to

| the `kernel` weights matrix.

| bias_regularizer: Regularizer function applied to the bias vector.

| activity_regularizer: Regularizer function applied to

| the output of the layer (its "activation").

| kernel_constraint: Constraint function applied to

| the `kernel` weights matrix.

| bias_constraint: Constraint function applied to the bias vector.

|

| Input shape:

| N-D tensor with shape: `(batch_size, ..., input_dim)`.

| The most common situation would be

| a 2D input with shape `(batch_size, input_dim)`.

|

| Output shape:

| N-D tensor with shape: `(batch_size, ..., units)`.

| For instance, for a 2D input with shape `(batch_size, input_dim)`,

| the output would have shape `(batch_size, units)`.

|

| Method resolution order:

| Dense

| tensorflow.python.keras.engine.base_layer.Layer

| tensorflow.python.module.module.Module

| tensorflow.python.training.tracking.tracking.AutoTrackable

| tensorflow.python.training.tracking.base.Trackable

| tensorflow.python.keras.utils.version_utils.LayerVersionSelector

| builtins.object

|

| Methods defined here:

|

| __init__(self, units, activation=None, use_bias=True, kernel_initializer='glorot_uniform', bias_initializer='zeros', kernel_regularizer=None, bias_regularizer=None, activity_regularizer=None, kernel_constraint=None, bias_constraint=None, **kwargs)

|

| build(self, input_shape)

| Creates the variables of the layer (optional, for subclass implementers).

|

| This is a method that implementers of subclasses of `Layer` or `Model`

| can override if they need a state-creation step in-between

| layer instantiation and layer call.

|

| This is typically used to create the weights of `Layer` subclasses.

|

| Args:

| input_shape: Instance of `TensorShape`, or list of instances of

| `TensorShape` if the layer expects a list of inputs

| (one instance per input).

|

| call(self, inputs)

| This is where the layer's logic lives.

|

| Note here that `call()` method in `tf.keras` is little bit different

| from `keras` API. In `keras` API, you can pass support masking for

| layers as additional arguments. Whereas `tf.keras` has `compute_mask()`

| method to support masking.

|

| Args:

| inputs: Input tensor, or dict/list/tuple of input tensors.

| The first positional `inputs` argument is subject to special rules:

| - `inputs` must be explicitly passed. A layer cannot have zero

| arguments, and `inputs` cannot be provided via the default value

| of a keyword argument.

| - NumPy array or Python scalar values in `inputs` get cast as tensors.

| - Keras mask metadata is only collected from `inputs`.

| - Layers are built (`build(input_shape)` method)

| using shape info from `inputs` only.

| - `input_spec` compatibility is only checked against `inputs`.

| - Mixed precision input casting is only applied to `inputs`.

| If a layer has tensor arguments in `*args` or `**kwargs`, their

| casting behavior in mixed precision should be handled manually.

| - The SavedModel input specification is generated using `inputs` only.

| - Integration with various ecosystem packages like TFMOT, TFLite,

| TF.js, etc is only supported for `inputs` and not for tensors in

| positional and keyword arguments.

| *args: Additional positional arguments. May contain tensors, although

| this is not recommended, for the reasons above.

| **kwargs: Additional keyword arguments. May contain tensors, although

| this is not recommended, for the reasons above.

| The following optional keyword arguments are reserved:

| - `training`: Boolean scalar tensor of Python boolean indicating

| whether the `call` is meant for training or inference.

| - `mask`: Boolean input mask. If the layer's `call()` method takes a

| `mask` argument, its default value will be set to the mask generated

| for `inputs` by the previous layer (if `input` did come from a layer

| that generated a corresponding mask, i.e. if it came from a Keras

| layer with masking support).

|

| Returns:

| A tensor or list/tuple of tensors.

|

| compute_output_shape(self, input_shape)

| Computes the output shape of the layer.

|

| If the layer has not been built, this method will call `build` on the

| layer. This assumes that the layer will later be used with inputs that

| match the input shape provided here.

|

| Args:

| input_shape: Shape tuple (tuple of integers)

| or list of shape tuples (one per output tensor of the layer).

| Shape tuples can include None for free dimensions,

| instead of an integer.

|

| Returns:

| An input shape tuple.

|

| get_config(self)

| Returns the config of the layer.

|

| A layer config is a Python dictionary (serializable)

| containing the configuration of a layer.

| The same layer can be reinstantiated later

| (without its trained weights) from this configuration.

|

| The config of a layer does not include connectivity

| information, nor the layer class name. These are handled

| by `Network` (one layer of abstraction above).

|

| Note that `get_config()` does not guarantee to return a fresh copy of dict

| every time it is called. The callers should make a copy of the returned dict

| if they want to modify it.

|

| Returns:

| Python dictionary.

|

| ----------------------------------------------------------------------

| Methods inherited from tensorflow.python.keras.engine.base_layer.Layer:

|

| __call__(self, *args, **kwargs)

| Wraps `call`, applying pre- and post-processing steps.

|

| Args:

| *args: Positional arguments to be passed to `self.call`.

| **kwargs: Keyword arguments to be passed to `self.call`.

|

| Returns:

| Output tensor(s).

|

| Note:

| - The following optional keyword arguments are reserved for specific uses:

| * `training`: Boolean scalar tensor of Python boolean indicating

| whether the `call` is meant for training or inference.

| * `mask`: Boolean input mask.

| - If the layer's `call` method takes a `mask` argument (as some Keras

| layers do), its default value will be set to the mask generated

| for `inputs` by the previous layer (if `input` did come from

| a layer that generated a corresponding mask, i.e. if it came from

| a Keras layer with masking support.

| - If the layer is not built, the method will call `build`.

|

| Raises:

| ValueError: if the layer's `call` method returns None (an invalid value).

| RuntimeError: if `super().__init__()` was not called in the constructor.

|

| __delattr__(self, name)

| Implement delattr(self, name).

|

| __getstate__(self)

|

| __setattr__(self, name, value)

| Support self.foo = trackable syntax.

|

| __setstate__(self, state)

|

| add_loss(self, losses, **kwargs)

| Add loss tensor(s), potentially dependent on layer inputs.

|

| Some losses (for instance, activity regularization losses) may be dependent

| on the inputs passed when calling a layer. Hence, when reusing the same

| layer on different inputs `a` and `b`, some entries in `layer.losses` may

| be dependent on `a` and some on `b`. This method automatically keeps track

| of dependencies.

|

| This method can be used inside a subclassed layer or model's `call`

| function, in which case `losses` should be a Tensor or list of Tensors.

|

| Example:

|

| ```python

| class MyLayer(tf.keras.layers.Layer):

| def call(self, inputs):

| self.add_loss(tf.abs(tf.reduce_mean(inputs)))

| return inputs

| ```

|

| This method can also be called directly on a Functional Model during

| construction. In this case, any loss Tensors passed to this Model must

| be symbolic and be able to be traced back to the model's `Input`s. These

| losses become part of the model's topology and are tracked in `get_config`.

|

| Example:

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| x = tf.keras.layers.Dense(10)(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| # Activity regularization.

| model.add_loss(tf.abs(tf.reduce_mean(x)))

| ```

|

| If this is not the case for your loss (if, for example, your loss references

| a `Variable` of one of the model's layers), you can wrap your loss in a

| zero-argument lambda. These losses are not tracked as part of the model's

| topology since they can't be serialized.

|

| Example:

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| d = tf.keras.layers.Dense(10)

| x = d(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| # Weight regularization.

| model.add_loss(lambda: tf.reduce_mean(d.kernel))

| ```

|

| Args:

| losses: Loss tensor, or list/tuple of tensors. Rather than tensors, losses

| may also be zero-argument callables which create a loss tensor.

| **kwargs: Additional keyword arguments for backward compatibility.

| Accepted values:

| inputs - Deprecated, will be automatically inferred.

|

| add_metric(self, value, name=None, **kwargs)

| Adds metric tensor to the layer.

|

| This method can be used inside the `call()` method of a subclassed layer

| or model.

|

| ```python

| class MyMetricLayer(tf.keras.layers.Layer):

| def __init__(self):

| super(MyMetricLayer, self).__init__(name='my_metric_layer')

| self.mean = tf.keras.metrics.Mean(name='metric_1')

|

| def call(self, inputs):

| self.add_metric(self.mean(inputs))

| self.add_metric(tf.reduce_sum(inputs), name='metric_2')

| return inputs

| ```

|

| This method can also be called directly on a Functional Model during

| construction. In this case, any tensor passed to this Model must

| be symbolic and be able to be traced back to the model's `Input`s. These

| metrics become part of the model's topology and are tracked when you

| save the model via `save()`.

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| x = tf.keras.layers.Dense(10)(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| model.add_metric(math_ops.reduce_sum(x), name='metric_1')

| ```

|

| Note: Calling `add_metric()` with the result of a metric object on a

| Functional Model, as shown in the example below, is not supported. This is

| because we cannot trace the metric result tensor back to the model's inputs.

|

| ```python

| inputs = tf.keras.Input(shape=(10,))

| x = tf.keras.layers.Dense(10)(inputs)

| outputs = tf.keras.layers.Dense(1)(x)

| model = tf.keras.Model(inputs, outputs)

| model.add_metric(tf.keras.metrics.Mean()(x), name='metric_1')

| ```

|

| Args:

| value: Metric tensor.

| name: String metric name.

| **kwargs: Additional keyword arguments for backward compatibility.

| Accepted values:

| `aggregation` - When the `value` tensor provided is not the result of

| calling a `keras.Metric` instance, it will be aggregated by default

| using a `keras.Metric.Mean`.

|

| add_update(self, updates, inputs=None)

| Add update op(s), potentially dependent on layer inputs.

|

| Weight updates (for instance, the updates of the moving mean and variance

| in a BatchNormalization layer) may be dependent on the inputs passed

| when calling a layer. Hence, when reusing the same layer on

| different inputs `a` and `b`, some entries in `layer.updates` may be

| dependent on `a` and some on `b`. This method automatically keeps track

| of dependencies.

|

| This call is ignored when eager execution is enabled (in that case, variable

| updates are run on the fly and thus do not need to be tracked for later

| execution).

|

| Args:

| updates: Update op, or list/tuple of update ops, or zero-arg callable

| that returns an update op. A zero-arg callable should be passed in

| order to disable running the updates by setting `trainable=False`

| on this Layer, when executing in Eager mode.

| inputs: Deprecated, will be automatically inferred.

|

| add_variable(self, *args, **kwargs)

| Deprecated, do NOT use! Alias for `add_weight`.

|

| add_weight(self, name=None, shape=None, dtype=None, initializer=None, regularizer=None, trainable=None, constraint=None, use_resource=None, synchronization=<VariableSynchronization.AUTO: 0>, aggregation=<VariableAggregation.NONE: 0>, **kwargs)

| Adds a new variable to the layer.

|